Many districts have made real progress with three-dimensional science instruction. Students are being asked to explain phenomena, work with evidence, and think more deeply about how science works.

The harder question now is whether assessments are keeping up.

That is where many teams get stuck. A question can sound aligned to a Science and Engineering Practice (SEP) and still fall short of measuring it. NGSS guidance makes an important distinction here: students demonstrate science and engineering practices in the context of content, not in isolation. Strong multidimensional questions are meant to reveal sensemaking, not just recognition or recall.

What a weaker question gets wrong

For a middle school example, look at the performance expectation MS-LS2-1, where students are expected to analyze and interpret data to provide evidence for the effects of resource availability on organisms and populations of organisms in an ecosystem.

The goal is not just for students to read a graph. The goal is for them to use data to notice patterns, make sense of what is happening, and support an evidence-based idea.

A weaker question might ask: “In which month was the rabbit population highest?” Even if that question includes a graph, it only asks students to pull a single value. Students are reading data, but they are not analyzing it.

A stronger question might ask: “The graph shows rainfall, grass growth, and rabbit population over six months. What pattern in the data best explains the change in the rabbit population? Use evidence from at least two data sets to support your answer.”

This version is stronger because students have to interpret relationships in the data and use those relationships as evidence. That is much closer to what the performance expectation (PE) and the SEP are actually asking students to do.

Why this matters more than it seems

If a question only asks students to define a practice or identify what scientists do, it may tell you something about vocabulary familiarity. What it does not tell you very well is whether students can apply the practice in a meaningful context.

That matters for instruction, but it also matters for leadership decisions. If district teams are reviewing assessment quality, choosing resources, or trying to understand why students seem more successful in class than on a formal task, question design is one of the first places to look. It marks the difference between a question that sounds three-dimensional and one that actually generates evidence of three-dimensional learning.

Three signs a question is doing the real work

Strong SEP assessment does not have to be overly complicated, but it does need a few things in place.

Students have something to work with.

The practice does the work.

The answer cannot come from recall alone.

One fast question to ask during SEP review

“If we removed the graph, model, data, or phenomenon, could students still answer correctly from memory alone?”

If the answer is yes, the question may label the practice more than it actually elicits it.

That does not mean the question has no value. Some questions are still useful for building background knowledge or checking terminology. But if the goal is to assess Science and Engineering Practices, leaders need to know whether the question is truly asking students to use the practice in context.

What leaders can do next

The good news is that this does not require a full reset.

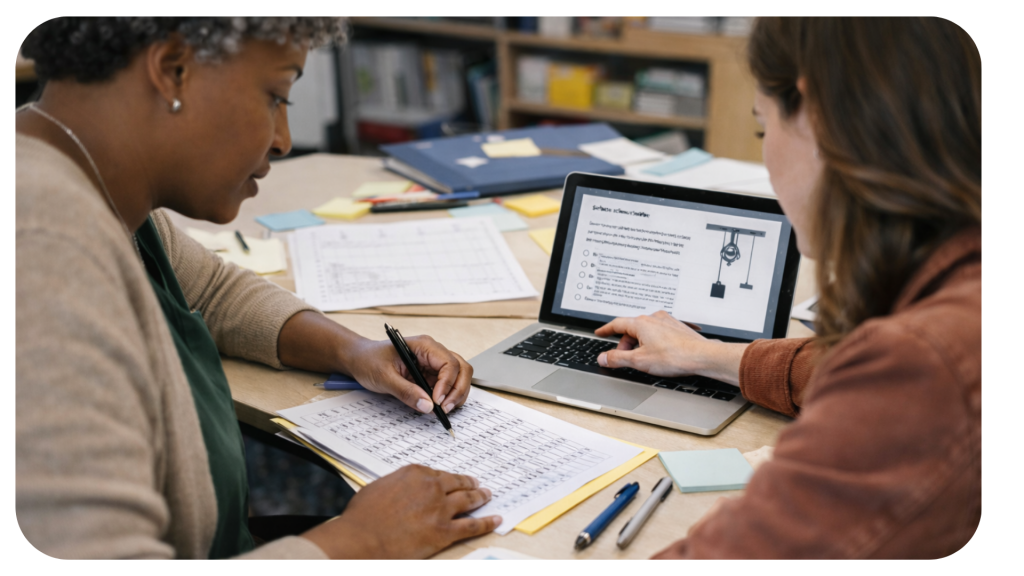

Most districts already have a strong starting point because teachers have been doing the harder instructional work of shifting toward three-dimensional learning. The next step is not starting from scratch. It is reviewing assessment questions with a clearer lens.

That can mean comparing questions that only mention a practice with questions that actually require it, revising a few high-priority questions instead of rebuilding everything, giving teams a short shared checklist for review, or using one strong example to anchor PLC or curriculum conversations.

Stronger SEP assessment is not about making questions harder. It is about making student thinking more visible. And when that happens, teachers and leaders get better information to support the next step in learning.